The Robots.txt Mistakes That Are Silently Killing Your SEO (I Learned Some of These the Hard Way)

I want to tell you about a Thursday afternoon that still makes me cringe a little.

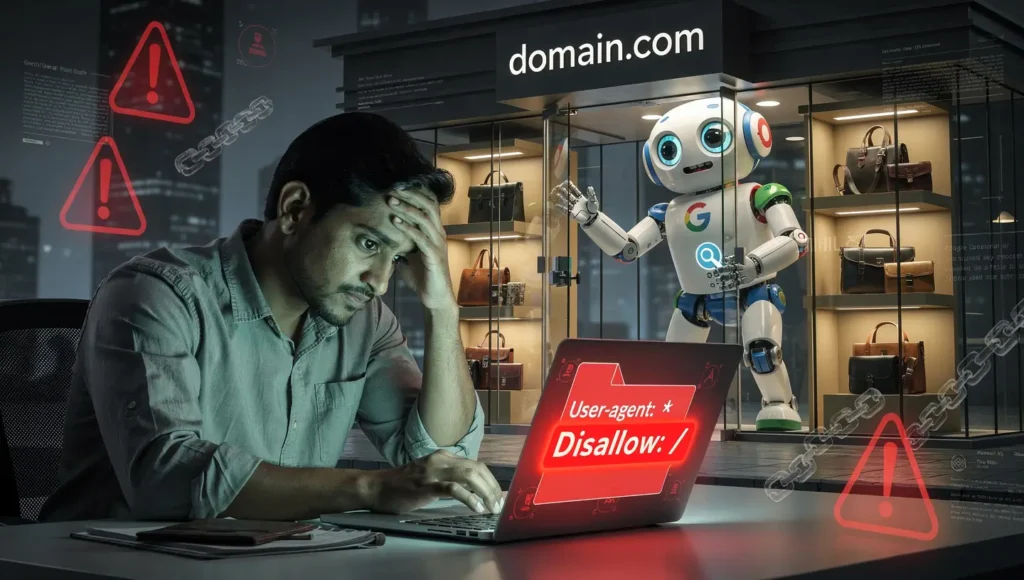

A client — a mid-sized e-commerce brand selling handmade leather goods — had been live for six weeks. Rankings were flat. Organic traffic was essentially zero. We’d done everything right: solid keyword research, clean site structure, decent backlinks from niche directories. The product pages were genuinely good.

I opened their robots.txt on a whim. Two lines. That’s all that was there.

User-agent: *

Disallow: /

Six weeks. Their developer had blocked the entire site during staging, launched it to the world, and never changed those two lines. Googlebot had been politely knocking on the door for a month and a half, and those two lines had been telling it to go away.

We fixed it in under three minutes. Their impressions in Google Search Console started climbing within a week.

Here’s what haunts me about that story: they had no idea. No error message. No warning. No notification of any kind. Their robots.txt file was quietly, invisibly sabotaging everything — and it would have kept doing so indefinitely if we hadn’t caught it by accident.

That’s what makes robots.txt so dangerous. When it’s wrong, it doesn’t yell at you. It just… doesn’t work.

So let’s fix that. This guide covers every major robots.txt mistake I’ve seen in the wild — not just in theory, but from real audits, real sites, and real SEO disasters that were 100% preventable.

First, a 60-Second Explanation of What Robots.txt Actually Does

If you’re already confident on the basics, skip ahead. But if there’s even a small chance you’re fuzzy on this, stay with me for a minute — because the mistakes make a lot more sense once the foundation is clear.

A robots.txt file is a plain text file that lives at the root of your website — for example, yoursite.com/robots.txt. It uses a straightforward set of directives to communicate with search engine crawlers like Googlebot and Bingbot, telling them which parts of your site they can access and which parts to leave alone.

The basic structure of a robots.txt file

A clean, working robots.txt looks like this:

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yoursite.com/sitemap.xml

- User-agent: * means “this rule applies to all crawlers”

- Disallow and Allow control which URLs bots can visit

- Sitemap points crawlers to all your important pages

The one distinction that changes everything

Robots.txt controls crawling, not indexing. These are two completely different things, and confusing them is one of the most common mistakes in the list below. Keep that in your head as you read on.

8 Robots.txt Mistakes Killing Your SEO

#1 Disallow All

Blocks your entire site from Google.

#2 Blocking CSS/JS

Google sees a broken version of your site.

#3 Disallow ≠ Noindex

Pages still appear in search results.

#4 Syntax Errors

One small mistake can break everything.

#5 Missing Sitemap

You’re not guiding Google properly.

#6 Admin-Ajax Block

Hides AJAX-loaded content from Google.

#7 Bot-Specific Rules

Wrong targeting = lost traffic.

#8 Migration Issues

Old rules silently block new pages.

Mistake #1: The "Disallow All" Trap That Takes Sites Off Google Completely

We already met this one in the opening story, but it deserves its own section because it’s shockingly common.

The Disallow: / instruction — paired with User-agent: * — tells every crawler on the internet to stay away from every single page on your site. It’s the nuclear option. And it’s exactly what you want during development, when you’re building something that shouldn’t appear in search results yet.

The problem is the “yet” part. Developers set this up, launch the site, and forget to undo it.

User-agent: *

Disallow: /

If your live site has these exact two lines, stop reading right now and go fix it.

Why developers leave this in place after launch

It’s not negligence — it’s workflow. Most developers use a staging block to prevent Google from indexing an unfinished site, which is smart. But launch-day checklists often skip the robots.txt step entirely. One missed item, weeks of invisible damage.

How to fix a “disallow all” robots.txt

Change your file to this:

User-agent: *

Disallow:

An empty Disallow value means “allow everything.” Or remove the line entirely. Then open Google Search Console, submit your sitemap, and request indexing for your most important pages to speed up recovery.

Add “verify robots.txt is not blocking everything” to your pre-launch checklist permanently. Tattoo it somewhere if that’s what it takes.
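That checklist item can be automated. Here is a minimal sketch using Python's standard-library `urllib.robotparser`. The function name `blocks_everything` is made up for this example, and it assumes that "the homepage path is blocked" is a good enough proxy for "the whole site is blocked".

```python
from urllib.robotparser import RobotFileParser


def blocks_everything(robots_txt: str, user_agent: str = "Googlebot") -> bool:
    """Return True if this robots.txt text blocks the site root for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # Assumption: if even "/" is off limits, treat the file as a "disallow all".
    return not parser.can_fetch(user_agent, "/")


staging = "User-agent: *\nDisallow: /"
live = "User-agent: *\nDisallow:"

print(blocks_everything(staging))  # True
print(blocks_everything(live))     # False
```

Run it against the text of the file you are about to deploy, and fail the launch if it returns True.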

Mistake #2: Blocking CSS and JavaScript Files (and Showing Google a Broken Version of Your Site)

Here’s something that surprises a lot of people: Google doesn’t just read text. It renders your entire page — CSS, JavaScript, fonts, images — exactly the way a browser would. If your robots.txt is blocking those resource files, Googlebot is experiencing a broken, half-functional version of your site.

And broken sites don’t rank.

Why blocking resources used to be recommended

Up until around 2014, blocking CSS and JS was actually standard advice. The reasoning made sense then — why waste Googlebot’s crawl budget on stylesheet files? But once Google started fully rendering pages, blocking resources became a liability rather than an efficiency gain.

Google’s own documentation is explicit: preventing Googlebot from crawling CSS and JavaScript will likely affect how well their algorithms render and index your content. That’s not a maybe. That’s a direct statement from the source.

Signs that resource blocking is hurting your site

- Your site looks perfectly fine to users, but Google shows odd snippets or missing content in search results

- The URL Inspection Tool in Google Search Console shows a very different rendered version than what users see

- Pages aren’t getting indexed despite being technically sound in every other way

How to fix resource blocking in robots.txt

Remove any Disallow directives targeting folders like /assets/, /css/, /js/, or /fonts/. Then run the URL Inspection Tool on a few key pages to confirm Google can now render them correctly. That screenshot it shows you is worth a thousand words.
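Before you open Search Console, you can get a rough list of blocked resources yourself. The sketch below checks some asset paths against a robots.txt body. The paths and the helper name `blocked_assets` are placeholders. One caveat: Python's built-in parser only matches plain path prefixes, so it will not evaluate wildcard rules such as `/*.css$` the way Google does.

```python
from urllib.robotparser import RobotFileParser


def blocked_assets(robots_txt, asset_paths, user_agent="Googlebot"):
    """Return the asset paths that user_agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in asset_paths if not parser.can_fetch(user_agent, path)]


rules = """
User-agent: *
Disallow: /assets/
Disallow: /js/
"""
assets = ["/assets/site.css", "/js/app.js", "/images/hero.jpg"]

print(blocked_assets(rules, assets))  # ['/assets/site.css', '/js/app.js']
```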

Mistake #3: Confusing Disallow With Noindex (They Do Completely Different Jobs)

This is probably the most misunderstood concept in all of robots.txt — and it trips up beginners and experienced SEOs alike.

Here’s the assumption people make: “If I put Disallow: /page/ in my robots.txt, Google won’t show that page in search results.”

That’s not what happens.

What disallow actually does (and doesn’t do)

When you disallow a URL, you’re telling crawlers not to visit it. But Google can still discover that URL through links on other pages and index it without ever crawling it. The result is a bare listing: usually just the URL, or a title guessed from anchor text, with no description. You’ve seen those results. They’re useless to users and embarrassing for the site owner.

Worse: Google can’t read the noindex tag on a page it’s not crawling. So if your goal was to hide a page from search results, disallowing it actually makes things less predictable, not more.

The real difference between disallow and noindex

| Goal | Correct tool |
|---|---|
| Stop Googlebot from crawling a page | Disallow in robots.txt |
| Stop a page from appearing in search results | noindex meta tag in HTML |
| Do both | Use noindex; don’t block crawling |

How to add a noindex meta tag

Place this inside the <head> of any page you don’t want indexed:

<meta name="robots" content="noindex">

Googlebot will crawl it, read the tag, and exclude it from search results. That’s the correct flow.
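To find pages where the two tools are fighting each other, you can look for any page that carries noindex and is also disallowed. This is a rough sketch using only the standard library. The class and function names are invented for illustration, and it only reads `<meta name="robots">` tags, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",")]


def noindex_is_hidden(robots_txt, path, html, user_agent="Googlebot"):
    """True when the page says noindex but robots.txt stops the crawler reading it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return "noindex" in finder.directives and not parser.can_fetch(user_agent, path)


page = '<html><head><meta name="robots" content="noindex"></head><body></body></html>'

print(noindex_is_hidden("User-agent: *\nDisallow: /thank-you/", "/thank-you/", page))  # True
print(noindex_is_hidden("User-agent: *\nDisallow:", "/thank-you/", page))              # False
```

A True result means the noindex tag will never be seen, and the Disallow rule is the one to remove.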

When disallow is actually the right choice

Use Disallow for pages you genuinely don’t want crawled at all — admin panels, internal dashboards, staging environments, utility scripts. Not for pages you just want hidden from search results.

Mistake #4: Syntax Errors That Make Your Entire Robots.txt File Useless

Robots.txt syntax is deceptively strict. Get it wrong and crawlers don’t throw you an error message — they just silently ignore your rules, or worse, misinterpret them in ways you’d never expect.

The most common robots.txt syntax mistakes

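Missing the space after the colon

Disallow:/private/ ← Works, but hard to read

Disallow: /private/ ← Preferred

Google’s parser and the Robots Exclusion Protocol standard (RFC 9309) both treat the space after the colon as optional, so this one rarely breaks anything on its own. Keep the space anyway: it makes typos easier to spot, and hand-rolled parsers in smaller crawlers may be less forgiving.

Mixing up what is case-sensitive

disallow: /admin/ ← Accepted (directive names are case-insensitive)

Disallow: /Admin/ ← Does not block /admin/ (paths are case-sensitive)

The capitalization of the directive name doesn’t matter to major crawlers. The capitalization of the path does.

Writing comments without the # symbol

This blocks the admin area ← Confuses parsers

# This blocks the admin area ← Correct

Stacking multiple user-agents on one line

User-agent: Googlebot, Bingbot ← Incorrect

User-agent: Googlebot ← Correct

User-agent: Bingbot ← Each on its own line

How to validate your robots.txt before it causes damage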

Don’t guess: validate. Google Search Console has a robots.txt report under Settings → Crawling, which replaced the older standalone robots.txt Tester. It shows the file Google last fetched, when it was fetched, and any lines Google couldn’t parse. To check whether a specific URL is blocked, run it through the URL Inspection Tool. Do both every single time you make a change to the file. It takes a minute or two and has saved me from embarrassing mistakes more than once.

Screaming Frog is also excellent for catching blocked URLs at scale during a full site audit.
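For a quick local check before you upload anything, a few lines of Python can catch the mistakes above. This is a deliberately small linter, not a full parser. The `KNOWN_FIELDS` set is my own assumption about which directives you expect to see, so adjust it to taste.

```python
# Assumption: these are the only field names you expect in your own file.
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots(robots_txt):
    """Return (line_number, message) pairs for lines that look suspicious."""
    problems = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "no colon: is this a comment missing '#'?"))
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        name = field.lower()
        if name not in KNOWN_FIELDS:
            problems.append((number, f"unknown field '{field}'"))
        elif name == "user-agent" and "," in value:
            problems.append((number, "use one crawler per User-agent line"))
        elif name in {"allow", "disallow"} and value and not value.startswith(("/", "*")):
            problems.append((number, "path should start with '/'"))
    return problems


sample = """User-agent: Googlebot, Bingbot
This blocks the admin area
Disallow: private/
Dissallow: /tmp/
Disallow:/ok/
"""

for number, message in lint_robots(sample):
    print(f"line {number}: {message}")
```

The first four lines of the sample are flagged. The last line, with no space after the colon, passes.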

Mistake #5: Forgetting to Include Your Sitemap (You're Leaving Crawl Efficiency on the Table)

Your robots.txt file and your XML sitemap are natural partners. One tells crawlers where not to go — the other shows them exactly where to go. Using one without the other wastes an easy opportunity to guide Googlebot to your most important pages.

How the sitemap directive works

Adding your sitemap to robots.txt is a single line:

Sitemap: https://yoursite.com/sitemap.xml

That’s it. Search engines that read your robots.txt, which most major ones do, will automatically discover and queue your sitemap for crawling. It doesn’t replace submitting your sitemap in Google Search Console, but it’s a useful belt-and-suspenders signal.

The sitemap mistakes that silently break this

- Pointing to an HTTP URL when your site is HTTPS

- Referencing a staging or dev domain that no longer exists

- Using a broken path that returns a 404

- Forgetting to update it after migrating to a sitemap index format

The fix: Check the URL in your Sitemap: directive right now. Paste it into your browser and confirm it loads a valid XML file. If you’re on WordPress, Yoast SEO and Rank Math add this automatically — but verify it’s pointing to the correct, live URL.
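The first two mistakes in that list can be caught without a network request. Here is a small sketch that pulls the Sitemap lines out of a robots.txt body and checks scheme and host. It relies on `RobotFileParser.site_maps()`, which needs Python 3.8 or newer. The helper name and the `live_host` parameter are assumptions for this example, and it does not check whether the URL returns a 404.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser


def sitemap_problems(robots_txt, live_host):
    """Return a list of human-readable problems with the Sitemap lines."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    sitemaps = parser.site_maps() or []  # site_maps() returns None when there is no Sitemap line
    if not sitemaps:
        return ["no Sitemap line found"]
    problems = []
    for url in sitemaps:
        parts = urlparse(url)
        if parts.scheme != "https":
            problems.append(f"{url}: not HTTPS")
        if parts.hostname != live_host:
            problems.append(f"{url}: host is not {live_host}")
    return problems


stale = "User-agent: *\nDisallow:\nSitemap: http://staging.yoursite.com/sitemap.xml"

for problem in sitemap_problems(stale, "yoursite.com"):
    print(problem)
```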

Mistake #6: The WordPress Admin-Ajax Problem Nobody Warned You About

Most WordPress guides will tell you to block /wp-admin/ in your robots.txt. That’s correct — you don’t want Googlebot crawling your dashboard.

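But here’s what almost nobody mentions: /wp-admin/admin-ajax.php is not a dashboard file. The frontend of your site uses it for dynamic functionality such as live search, cart updates, and AJAX-powered content loading. If it gets caught in your admin block, your visitors won’t notice a thing, because robots.txt never affects browsers. Googlebot will, though: it can’t fetch that endpoint while rendering, so anything loaded through it can go missing from the version of the page Google sees.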

The correct WordPress robots.txt configuration

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yoursite.com/sitemap.xml

That single Allow exception makes a meaningful difference. It’s a small detail, but small details are exactly what technical SEO is made of.
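Why does the Allow line win even though the Disallow line comes first? Google and RFC 9309 both say the most specific matching rule (the longest path) takes precedence, and that a tie goes to Allow. Here is a simplified sketch of that logic. It handles plain path prefixes only, with no `*` or `$` wildcards, and the function name is my own.

```python
def is_allowed(rules, path):
    """rules: ("allow" | "disallow", path_prefix) tuples from one user-agent group."""
    best_length, allowed = -1, True  # no matching rule means the path is crawlable
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue  # an empty value matches nothing; skip non-matching prefixes
        is_allow = directive == "allow"
        # Longer (more specific) prefixes win; on a tie, prefer the Allow rule.
        if len(prefix) > best_length or (len(prefix) == best_length and is_allow):
            best_length, allowed = len(prefix), is_allow
    return allowed


wordpress = [("disallow", "/wp-admin/"), ("allow", "/wp-admin/admin-ajax.php")]

print(is_allowed(wordpress, "/wp-admin/admin-ajax.php"))  # True
print(is_allowed(wordpress, "/wp-admin/options.php"))     # False
print(is_allowed(wordpress, "/shop/"))                    # True
```

Some simpler parsers apply rules in file order instead, which is one more reason to test with the crawler you actually care about.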

What to check on other CMS platforms

If you’re not on WordPress, do the equivalent audit. Review your Disallow rules and ask: is any of this accidentally catching a file that the frontend depends on? Admin panels, dashboards, and utility scripts are fair game. Frontend dependencies are not.

Mistake #7: Adding Bot-Specific Rules Without Fully Understanding the Consequences

It’s tempting to get sophisticated with your robots.txt by writing rules for individual crawlers — Bingbot, DuckDuckBot, GPTBot, and the rest. Sometimes this is exactly the right move. Often it causes more problems than it solves.

When bot-specific rules go wrong

Here’s a real configuration I audited once:

User-agent: Googlebot

Disallow: /images/

The intent was crawl budget savings. The actual result: Google Image Search stopped indexing every photo on the site, on a brand whose entire identity was visual. That “efficiency” measure cost them a significant traffic channel for months before anyone connected the dots.

Another common failure: blocking a scraper bot by user-agent string, getting the string slightly wrong, and having the rule do absolutely nothing while the content being “protected” remains fully accessible.

The rule that saves most people from themselves

Unless you have a specific, well-researched reason to target a named crawler, stick to one block:

User-agent: *

This covers the vast majority of use cases. Add named bot rules only when you can clearly articulate why, what the consequence is, and how you’ll verify it’s working.
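One consequence that catches people out: a crawler with its own named group stops reading the wildcard group entirely. The quick demonstration below uses Python's `urllib.robotparser` with made-up paths. It shows Googlebot obeying its own block while ignoring the `/wp-admin/` rule that everyone else gets.

```python
from urllib.robotparser import RobotFileParser

rules = """
User-agent: Googlebot
Disallow: /images/

User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

checks = [
    ("Googlebot", "/images/bag.jpg"),  # blocked by the Googlebot group
    ("Googlebot", "/wp-admin/"),       # allowed: the wildcard group no longer applies
    ("Bingbot", "/images/bag.jpg"),    # allowed: Bingbot falls back to the wildcard group
    ("Bingbot", "/wp-admin/"),         # blocked by the wildcard group
]
for agent, path in checks:
    print(agent, path, parser.can_fetch(agent, path))
```

If you add a named group, copy over every wildcard rule you still want that crawler to follow.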

Mistake #8: Never Updating Robots.txt After a Site Migration

This is the time-bomb mistake. It doesn’t break things immediately — it detonates three months later when you’ve completely forgotten what changed.

Why migrations make old robots.txt rules dangerous

When you move a site — new domain, HTTP to HTTPS, URL restructuring, subdirectory to root — your existing Disallow rules can become outdated instantly. They might now be blocking pages that are central to your new architecture, or protecting paths that no longer even exist.

I audited a client’s site a couple of years ago that had moved all blog posts from /blog/post-name/ to /post-name/ at the root level. Clean move, cleaner URLs. Except their robots.txt still had:

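Disallow: /blog/

The old paths were 301 redirects. The new paths were unblocked. On paper, nothing was wrong. But because every old /blog/ URL was disallowed, Googlebot was never allowed to request one, so it never saw a single redirect. The old URLs lingered in the index, and the signals pointing at them couldn’t be passed along to the new ones. Three months of stagnant organic traffic followed. The fix took ten minutes once we found it. Finding it took three months.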

What to check after every site migration

- Update any Disallow paths to match your new URL structure

- Confirm your Sitemap: URL is pointing to the new domain with HTTPS

- Remove rules for paths that no longer exist

- Test a sample of your most important URLs with the URL Inspection Tool in GSC, and check the robots.txt report for parse errors

Make this step part of your migration checklist, right alongside your redirect mapping. It’s not glamorous. It’s completely necessary.
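If you already have a redirect map, you can cross-check it against robots.txt in a few lines. This sketch flags old paths that crawlers are not allowed to request, which means their redirects will never be seen. The redirect pairs and the function name are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_redirect_sources(robots_txt, redirects, user_agent="Googlebot"):
    """redirects: dict of old path -> new path. Returns old paths crawlers cannot fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [old for old in redirects if not parser.can_fetch(user_agent, old)]


stale_rules = "User-agent: *\nDisallow: /blog/"
redirects = {"/blog/leather-care/": "/leather-care/", "/about-us/": "/about/"}

print(blocked_redirect_sources(stale_rules, redirects))  # ['/blog/leather-care/']
```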

How to Check Your Robots.txt Right Now (Takes Less Than 5 Minutes)

You don't need to wonder whether your robots.txt is healthy. Here's a quick four-step process you can do right now.

Step 1: Direct browser inspection

Go to yoursite.com/robots.txt in any browser. Read what’s there. Does anything look immediately wrong — a Disallow: / sitting at the top, for instance? This is your fastest sanity check.

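Step 2: Google Search Console robots.txt report

In GSC, go to Settings → Crawling → robots.txt. Confirm that Google fetched the file successfully and recently, and that it lists no errors or warnings. The report doesn’t test individual URLs, so for the pages you care about (your homepage, top product pages, blog posts) run each one through the URL Inspection Tool and confirm that crawling is allowed.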

Step 3: URL Inspection Tool for rendering issues

Still in Google Search Console, use the URL Inspection Tool on a few key pages. It shows you a screenshot of exactly how Google renders your page. If JavaScript or CSS is being blocked, you’ll see a broken version — and you’ll know exactly what to fix.

Step 4: Screaming Frog crawl for bulk blocked-URL discovery

If you’re doing a full audit, run Screaming Frog and filter results by “Blocked by robots.txt.” You might find pages in that list that have no business being there. This is where the quiet, long-term damage often hides.

Do this quarterly at minimum. Monthly if your site changes frequently.

A Clean Robots.txt Template You Can Actually Use

Here's a solid starting point for most websites — copy, adjust, and go.

Standard site template

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yoursite.com/sitemap.xml

E-commerce site template

User-agent: *

Disallow: /wp-admin/

Allow: /wp-admin/admin-ajax.php

Disallow: /cart/

Disallow: /checkout/

Disallow: /my-account/

Disallow: /?s=

Disallow: /search/

Sitemap: https://yoursite.com/sitemap.xml

The e-commerce version keeps Googlebot away from cart and checkout pages (low-value, session-specific) and internal search results (near-infinite duplicate content). Every Disallow rule here has a clear reason behind it.

Keep it purposeful. Every line should be there for a reason you can explain out loud.

The Bottom Line: Robots.txt Is Your SEO Foundation, Not a Bonus Step

Here’s the perspective I always come back to.

Your robots.txt file won’t rank you on page one. It’s not a growth tool. But it is — without question — a protection tool. When it’s working correctly, all the SEO work you’re doing elsewhere gets a fair chance to succeed. When it’s broken, you might be pouring months of effort into a campaign that Googlebot is being quietly told to ignore.

Think of it like the electrical wiring in a house. Nobody brags about their wiring. But without it, none of the lights come on.

Get the wiring right first. Then build everything else on top of it.

FAQ: Common Robots.txt Questions Answered

What exactly is a robots.txt file and what does it do?

A robots.txt file is a plain text file stored at the root of your website that tells search engine crawlers which pages they should and shouldn’t visit. It follows the Robots Exclusion Protocol — an internet standard that well-behaved bots respect. It controls crawling behaviour, not indexing directly.

How do I use robots.txt for SEO without hurting my rankings?

Use it to prevent crawling of genuinely low-value pages — admin panels, checkout pages, internal search results, and duplicate content. Always include your sitemap URL. Never block CSS, JavaScript, or media files. Validate your file in Google Search Console after every single change.

Where do I find the robots.txt file on my website?

Type https://yourdomain.com/robots.txt directly into your browser. If the file exists, you’ll see plain text. A 404 error means the file either doesn’t exist or isn’t placed at the root of your domain (it must live there — not in a subdirectory).

How do I set up robots.txt correctly on WordPress?

Plugins like Yoast SEO and Rank Math generate and manage robots.txt automatically. You can also edit it manually by accessing your site’s root directory via FTP. For most WordPress sites, the configuration in this guide — with the wp-admin block and the admin-ajax.php exception — is the right baseline to start from.

What is the real difference between a robots.txt Disallow rule and a noindex tag?

Disallow in robots.txt tells a crawler not to visit the page at all. A noindex meta tag in the page’s HTML tells a crawler not to include that page in search results. They do different jobs. You cannot effectively use noindex on a page you’ve disallowed — the crawler never reads the tag if it’s not allowed to crawl the page.

Is a robots.txt file actually required for SEO?

Not strictly. You can have a perfectly healthy site without one; when the file is missing, crawlers simply assume everything is allowed. But it’s best practice to have one: it’s where you declare your sitemap and state your crawling preferences explicitly. Even a minimal, permissive file is better than no file.

How do robots.txt rules work when there are multiple user-agent entries?

Each User-agent: line specifies which bot the following rules apply to. A crawler follows only the most specific group that matches its name, so rules for a named bot like Googlebot replace the wildcard * rules for that crawler rather than adding to them. If no named group exists for a bot, it falls back to the User-agent: * block. For most sites, one wildcard block is all you’ll ever need.

Can I block all search engines at once with robots.txt?

Yes — User-agent: * followed by Disallow: / blocks every crawler from crawling your entire site. This is appropriate during development. It’s catastrophic if accidentally left live after launch. Go check your live robots.txt right now if you’re not 100% sure what’s in it.